SaaS Support Automation: From Ticket Hell to Self-Service

Most $10M-$40M ARR B2B SaaS support orgs are running 2018 architecture against 2026 economics: a shared inbox, a Zendesk instance, a CSM team that doubles as tier-1 support, a help center nobody updates, and a chatbot that escalates 80% of conversations to humans within two replies. The result: cost per ticket creeps from $20 to $35, support cost crosses 12% of ARR, and the only available scaling lever is hiring more agents, the same trap the scaling-revenue-without-scaling-headcount playbook diagnoses across the rest of the operations stack. That is ticket hell. SaaS support automation is the engineered exit. This guide installs it as a five-layer operating system, not a chatbot project.

Built for Sarah Chen at $10M-$40M ARR. Operations pillar. The objective is not "AI in support." The objective is to compound deflection, agent leverage, and proactive resolution into a system where ticket volume decouples from customer count and support cost compresses while CSAT rises. The discipline is the same one that anchors B2B customer retention strategy: engineered systems, not heroics.

| Figure | What it measures | Source |
| --- | --- | --- |
| 41.2% | Median tier-1 AI deflection rate (2026) | Digital Applied 2026 |
| 58.7% | Top-quartile AI deflection rate | Digital Applied 2026 |
| $30-$60 | B2B SaaS cost per ticket (human-handled) | Supportbench 2026 |
| 7.3x | Self-service cost advantage ($1.84 vs $13.50) | Screenbuddy 2026 |

The economics of ticket hell

Before the architecture, the math. A B2B SaaS at $20M ARR with 1,000 customers running unautomated support typically generates 4 to 8 tickets per customer per year. At a midpoint of 6 tickets per customer, that is 6,000 tickets annually. At Supportbench's 2026 B2B cost-per-ticket benchmark of $30 to $60, that is $180K to $360K in pure support delivery cost, before tooling, before the loaded cost of CSMs absorbing tier-2 escalation, before the opportunity cost of senior engineers debugging integration issues. Layer in Count.co's 2026 contact-cost benchmark range of $15-$45 for B2B SaaS, and the picture is consistent: support cost scales linearly with customer count unless something breaks the linearity.
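The cost arithmetic above is simple enough to keep as a reusable model. A minimal Python sketch, using only the text's own inputs (1,000 customers, 6 tickets per customer, Supportbench's $30-$60 range); the function name is illustrative, and, as in the text, the figure excludes tooling, CSM time, and engineering escalations.

```python
def annual_ticket_cost(customers: int, tickets_per_customer: float,
                       cost_per_ticket: float) -> float:
    """Human-handled support delivery cost per year (excludes tooling and overhead)."""
    return customers * tickets_per_customer * cost_per_ticket


customers, tickets_per_customer = 1_000, 6
for cost_per_ticket in (30, 60):  # Supportbench 2026 B2B range
    total = annual_ticket_cost(customers, tickets_per_customer, cost_per_ticket)
    print(f"{customers * tickets_per_customer:,} tickets at ${cost_per_ticket} -> ${total:,.0f}")
# 6,000 tickets at $30 -> $180,000
# 6,000 tickets at $60 -> $360,000

# Linearity: doubling the customer base doubles the cost, absent automation.
assert annual_ticket_cost(2_000, 6, 30) == 2 * annual_ticket_cost(1_000, 6, 30)
```

Every term is a multiplier, so cost moves one-for-one with customer count; only a lower tickets-per-customer or cost-per-ticket term bends the line.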

The thing that breaks linearity is automation. Screenbuddy's 2026 self-service economics analysis documents the multiplier: a human-assisted contact costs $13.50 vs $1.84 for a self-service contact, a 7.3x cost advantage per resolved query. Digital Applied's 2026 CX agent statistics (120+ data points) put median tier-1 AI deflection at 41.2% across enterprise CX programs, with the top quartile clearing 58.7% and leading deployments (Klarna, Shopify) reporting 70%+ on routine queries.
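To see what a given deflection rate does to unit cost, the two Screenbuddy figures can be blended. A rough sketch, assuming (our simplification, not a source claim) that every deflected contact costs the self-service rate and every other contact costs the human-assisted rate; in practice AI-agent resolutions carry their own per-resolution fee.

```python
HUMAN_COST = 13.50        # Screenbuddy 2026: human-assisted contact
SELF_SERVICE_COST = 1.84  # Screenbuddy 2026: self-service contact


def blended_cost_per_contact(automated_share: float) -> float:
    """Average cost per resolved contact when `automated_share` (0-1) is self-served."""
    if not 0.0 <= automated_share <= 1.0:
        raise ValueError("automated_share must be between 0 and 1")
    return automated_share * SELF_SERVICE_COST + (1 - automated_share) * HUMAN_COST


print(f"cost ratio: {HUMAN_COST / SELF_SERVICE_COST:.1f}x")  # cost ratio: 7.3x
for share in (0.0, 0.412, 0.587):  # no automation, median, top-quartile deflection
    print(f"{share:.1%} automated -> ${blended_cost_per_contact(share):.2f}")
# 0.0% automated -> $13.50
# 41.2% automated -> $8.70
# 58.7% automated -> $6.66
```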

The Support Hell Test

If your support cost is over 10% of ARR, your ticket volume scales linearly with customer count, and your CSMs spend over 30% of their time on tier-1 reactive support, you are running ticket hell. The exit is the five-layer architecture below, not another agent hire.
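The test's three conditions can be checked mechanically. A minimal sketch; the linearity check is our assumption, not part of the test as stated: it fits the slope of log(tickets) against log(customers) over recent periods and treats a slope of 0.9 or more (an arbitrary cutoff) as linear scaling.

```python
import math


def scaling_exponent(customers: list[float], tickets: list[float]) -> float:
    """Least-squares slope of log(tickets) on log(customers); ~1.0 means proportional growth."""
    xs = [math.log(c) for c in customers]
    ys = [math.log(t) for t in tickets]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var = sum((x - mx) ** 2 for x in xs)
    return cov / var


def in_ticket_hell(support_cost: float, arr: float,
                   customers: list[float], tickets: list[float],
                   csm_tier1_time_share: float, linear_threshold: float = 0.9) -> bool:
    """True only when all three Support Hell Test conditions hold."""
    cost_too_high = support_cost / arr > 0.10
    scales_linearly = scaling_exponent(customers, tickets) >= linear_threshold
    csms_absorbed = csm_tier1_time_share > 0.30
    return cost_too_high and scales_linearly and csms_absorbed


# Quarterly snapshots: 1.5 tickets per customer per quarter (6 a year), every quarter.
customers_q = [800, 900, 1_000, 1_100]
tickets_q = [1_200, 1_350, 1_500, 1_650]
print(in_ticket_hell(2_400_000, 20_000_000, customers_q, tickets_q, 0.35))  # True
```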

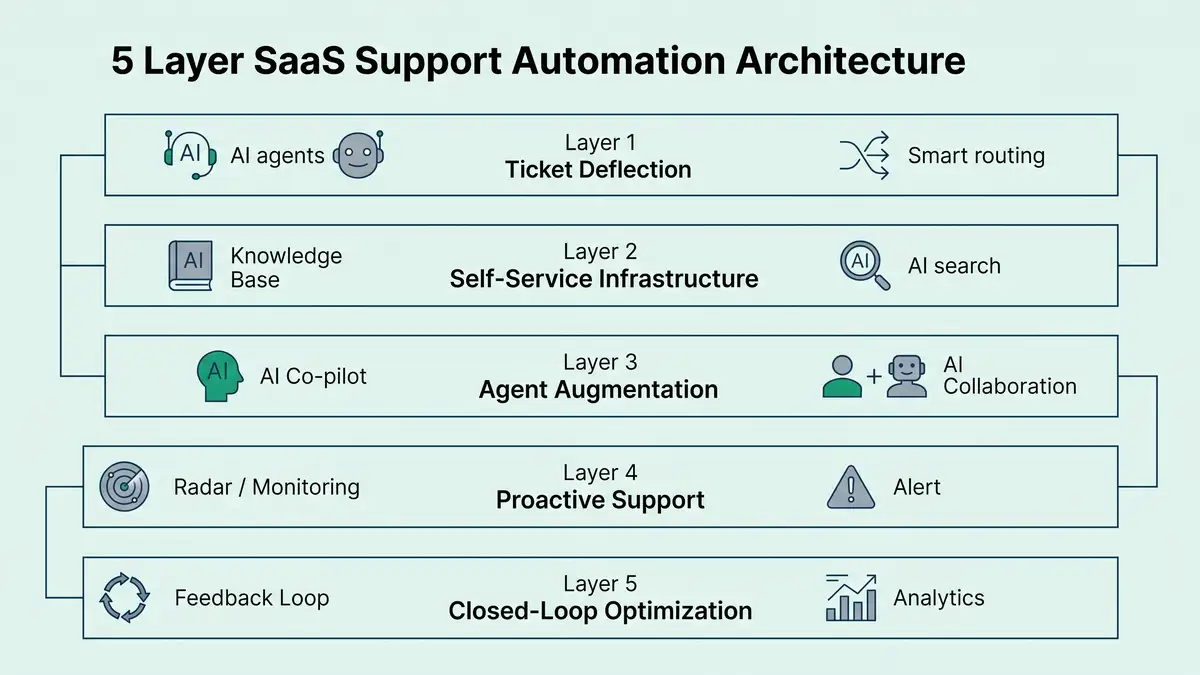

The five-layer support automation architecture

A defensible support automation system has five layers, each with its own metrics, owners, and intervention playbooks. Skip a layer and the others compound less. Most failed "AI support" projects are layer-1 chatbot deployments wearing the costume of full-stack automation.

Layer 1: Ticket deflection

The front door. AI agents (Intercom Fin, Zendesk AI agents, Ada, Decagon, Salesforce Agentforce) intercept incoming queries before they reach a human. Modern LLM-powered agents resolve 40-70% of routine queries autonomously, vs 20-30% for the rule-based chatbots that defined the 2020-2023 generation. The metric: deflection rate (autonomously resolved / total inbound), with 40%+ as healthy and 60%+ as top-quartile in 2026. Failure mode: routing to a human right after the greeting, which is deflection theater, not deflection.
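A minimal sketch of the layer-1 metric plus a guard against deflection theater. Assumptions: each conversation record carries the number of AI replies sent and whether it was escalated; a non-escalated conversation counts as autonomously resolved (a simplification, since abandoned chats never escalate either); and "early handoff" means escalation after at most one AI reply. The `Conversation` type is hypothetical, not any vendor's export format.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    ai_replies: int           # AI messages sent before resolution or handoff
    escalated_to_human: bool


def deflection_rate(convs: list[Conversation]) -> float:
    """Autonomously resolved / total inbound."""
    if not convs:
        return 0.0
    return sum(not c.escalated_to_human for c in convs) / len(convs)


def early_handoff_share(convs: list[Conversation], max_ai_replies: int = 1) -> float:
    """Share of escalations handed off after at most `max_ai_replies` AI replies."""
    escalated = [c for c in convs if c.escalated_to_human]
    if not escalated:
        return 0.0
    return sum(c.ai_replies <= max_ai_replies for c in escalated) / len(escalated)


convs = ([Conversation(3, False)] * 4 + [Conversation(1, True)] * 3
         + [Conversation(4, True)])
print(deflection_rate(convs), early_handoff_share(convs))  # 0.5 0.75
```

A high early-handoff share next to a respectable deflection rate suggests the agent is greeting and escalating rather than resolving.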

Layer 2: Self-service infrastructure

The knowledge base, in-app guidance, AI search. The substrate the AI agents draw from and the channel customers prefer when they want to solve their own problem. Top-quartile B2B SaaS achieves 30-50% deflection through this layer alone before the AI agent even fires. The metric: self-service success rate (% of help-center sessions that end without contacting support). Failure mode: outdated help center, no AI search, articles written for the company instead of the customer.
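A minimal sketch of the layer-2 metric. The attribution rule is an assumption: a help-center session counts as a failure if the same customer contacts support within 24 hours of starting it. Inputs are hypothetical `(customer_id, timestamp)` pairs.

```python
from datetime import datetime, timedelta


def self_service_success_rate(sessions, contacts, window=timedelta(hours=24)):
    """sessions, contacts: lists of (customer_id, datetime).
    A session succeeds if its customer does not contact support within `window`."""
    if not sessions:
        return 0.0
    contacts_by_customer: dict[str, list[datetime]] = {}
    for customer_id, ts in contacts:
        contacts_by_customer.setdefault(customer_id, []).append(ts)
    successes = sum(
        not any(start <= ts <= start + window
                for ts in contacts_by_customer.get(customer_id, []))
        for customer_id, start in sessions
    )
    return successes / len(sessions)


t0 = datetime(2026, 3, 2, 9, 0)
sessions = [("acme", t0), ("globex", t0), ("initech", t0), ("umbrella", t0)]
contacts = [("acme", t0 + timedelta(hours=2)),     # contacted support: failure
            ("globex", t0 + timedelta(hours=48))]  # outside the window: success
print(self_service_success_rate(sessions, contacts))  # 0.75
```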

Layer 3: Agent augmentation

AI co-pilot for the human support agents who handle escalations and complex tier-2/tier-3 cases: drafted replies, automatic ticket summaries, suggested macros, semantic search across past tickets. Per Useparagon's 2026 integration analysis, Zendesk appears in around 35% of AI-first SaaS support stacks. The augmentation layer typically lifts agent productivity 20-40% across handle time, FCR, and CSAT. The metrics: average handle time (AHT) and first contact resolution (FCR). Failure mode: deploying a co-pilot with no agent training and watching agents distrust the suggested replies.
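The two layer-3 metrics, sketched on a hypothetical ticket record. Assumptions: handle time is total agent working minutes on an escalated ticket, and first contact resolution means the ticket closed after a single customer-agent exchange; the before/after samples are invented for illustration.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class EscalatedTicket:
    handle_minutes: float      # total agent working time
    exchanges_to_resolve: int  # 1 = resolved on first contact


def aht(tickets: list[EscalatedTicket]) -> float:
    return mean(t.handle_minutes for t in tickets)


def fcr(tickets: list[EscalatedTicket]) -> float:
    return sum(t.exchanges_to_resolve == 1 for t in tickets) / len(tickets)


def pct_change(before: float, after: float) -> float:
    return (after - before) / before * 100


before = [EscalatedTicket(18, 1), EscalatedTicket(22, 2),
          EscalatedTicket(20, 1), EscalatedTicket(20, 3)]
after = [EscalatedTicket(12, 1), EscalatedTicket(16, 1),
         EscalatedTicket(14, 2), EscalatedTicket(14, 1)]
print(f"AHT change: {pct_change(aht(before), aht(after)):.0f}%")
print(f"FCR {fcr(before):.0%} -> {fcr(after):.0%}")
```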

Layer 4: Proactive support

The shift from reactive to proactive. In-product alerts, anomaly detection on customer telemetry, automated outreach when a customer is about to hit an error or rate limit, status-page integrations, churn-risk-triggered support touch tied directly to SaaS churn analysis signals. Customer health scores feed this layer. The metric: contained issues (problems resolved before a ticket was created). Failure mode: spamming customers with proactive notifications they did not ask for, eroding trust.
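A minimal sketch of a layer-4 trigger with a built-in guard against the spam failure mode: telemetry events map to a resolution message, and each customer receives at most one message per event type per cooldown window. The event names, message text, and seven-day cooldown are hypothetical placeholders, not any product's schema.

```python
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical event types and messages; map these to your own telemetry and articles.
PLAYBOOK = {
    "http_5xx": "We saw failed requests on your account. Here is the troubleshooting guide.",
    "rate_limit_warning": "You are close to your API rate limit. Here is how to batch requests.",
}


class ProactiveNotifier:
    """At most one proactive message per customer per event type within `cooldown`."""

    def __init__(self, cooldown: timedelta = timedelta(days=7)):
        self.cooldown = cooldown
        self.last_sent: dict[tuple[str, str], datetime] = {}

    def handle(self, customer_id: str, event_type: str, at: datetime) -> Optional[str]:
        message = PLAYBOOK.get(event_type)
        if message is None:
            return None  # no known resolution: leave it to the normal support flow
        key = (customer_id, event_type)
        last = self.last_sent.get(key)
        if last is not None and at - last < self.cooldown:
            return None  # suppressed: this customer was notified recently
        self.last_sent[key] = at
        return message


notifier = ProactiveNotifier()
t0 = datetime(2026, 3, 2)
print(notifier.handle("acme", "http_5xx", t0) is not None)                      # True
print(notifier.handle("acme", "http_5xx", t0 + timedelta(days=1)) is not None)  # False
```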

Layer 5: Closed-loop optimization

The analytics layer that ensures the other four compound rather than stagnate. Automatic ticket categorization, voice-of-customer surfacing, deflection-rate diagnostics by topic, knowledge-base gap detection, weekly playbook recalibration. Without this layer, the system ships once and decays. The metric: % of new ticket categories surfaced and routed within 7 days of first occurrence. Failure mode: ship the system, never look at the data.
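The layer-5 metric, sketched on hypothetical records of when each new ticket category was first seen and when it was routed to an owner. Categories not yet routed count against the metric.

```python
from datetime import date, timedelta
from typing import Optional


def routed_within_sla(categories: list[tuple[date, Optional[date]]],
                      sla: timedelta = timedelta(days=7)) -> float:
    """Share of new categories routed to a playbook owner within `sla` of first occurrence.
    Each item is (first_seen, routed_on); routed_on is None if not yet routed."""
    if not categories:
        return 0.0
    on_time = sum(routed_on is not None and routed_on - first_seen <= sla
                  for first_seen, routed_on in categories)
    return on_time / len(categories)


categories = [
    (date(2026, 3, 1), date(2026, 3, 5)),   # 4 days: on time
    (date(2026, 3, 1), date(2026, 3, 10)),  # 9 days: late
    (date(2026, 3, 2), None),               # not routed yet
    (date(2026, 3, 3), date(2026, 3, 10)),  # exactly 7 days: on time
]
print(routed_within_sla(categories))  # 0.5
```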

The 2026 platform stack

Tooling does not produce automation; the layered architecture does. But the right stack accelerates everything. Below is the 2026 stack for $10M-$40M ARR B2B SaaS, with the adjacent SMB and enterprise tiers for comparison, sourced from Build MVP Fast's April 2026 platform comparison, Eesel's 2026 Agentforce pricing breakdown, and Fin AI's 2026 platform guide.

| Tier | Core stack | Annual cost (1k customers) | Best fit |
| --- | --- | --- | --- |
| SMB / scrappy ($1M-$10M ARR) | HubSpot Service Hub OR Help Scout + Intercom Fin add-on, native AI search | $18K-$45K | Under $10M ARR, <500 customers |
| Mid-market ($10M-$40M ARR, Sarah Chen tier) | Zendesk Suite + Zendesk AI agents OR Intercom + Fin, Help Scout for community/email, Pendo or Heap for in-app | $60K-$140K | $10M-$40M ARR, 500-3,000 customers |
| Enterprise ($50M+ ARR) | Salesforce Service Cloud + Agentforce OR Zendesk Enterprise + Ada/Decagon, full closed-loop analytics | $250K-$1M+ | Multi-segment, multi-region, $50M+ ARR |

Sources: Build MVP Fast 2026, Eesel 2026 Agentforce, Fin AI 2026, Giva Top 16 CS Software 2026.

Per-resolution pricing has hardened as the dominant commercial model for AI agents. The AI Corner's 2026 SaaS Defense Playbook documents the anchor: Intercom bundles 50 free Fin resolutions per month on the base tier and charges $0.99 per additional resolution; Salesforce Agentforce bundles 1 million credits on the top edition. Compared to a $30-$60 human-handled ticket, the math is straightforward, and that math is what makes 2026 the year that mid-market B2B SaaS flips from "we're piloting AI support" to "AI agents are the default first-touch."
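The per-resolution comparison can be made concrete. A rough monthly sketch using the pricing anchor as reported above (50 included resolutions, $0.99 each thereafter); it ignores seat and platform subscription fees, and the 500-tickets-per-month volume and 40% AI resolution share are illustrative assumptions (roughly the earlier 6,000-ticket example at a healthy deflection rate).

```python
def ai_resolution_cost(resolutions: int, included: int = 50, price: float = 0.99) -> float:
    """Per-resolution fee beyond the included monthly bundle."""
    return max(0, resolutions - included) * price


def human_ticket_cost(tickets: int, cost_per_ticket: float) -> float:
    return tickets * cost_per_ticket


monthly_tickets = 500                       # ~6,000 tickets a year
ai_resolved = int(monthly_tickets * 0.40)   # 200 resolved by the AI agent
fees = ai_resolution_cost(ai_resolved)      # 150 billable resolutions
for cpt in (30, 60):                        # Supportbench human-handled range
    avoided = human_ticket_cost(ai_resolved, cpt)
    print(f"at ${cpt}/ticket: ${avoided:,.0f} human cost avoided for ${fees:,.2f} in fees")
```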

The benchmark table

Without numbers, "good support automation" is theatre. Here is what 2026 data actually says about B2B SaaS support performance, drawn from the validated sources cited throughout.

| Metric | Healthy | Top-Quartile | Alarming |
| --- | --- | --- | --- |
| AI agent deflection rate (tier-1) | 40-50% | 58%+ | under 25% |
| Self-service deflection (help center) | 30-45% | 50%+ | under 20% |
| Cost per ticket (B2B SaaS, blended) | $15-$30 | under $12 | over $45 |
| Cost per self-service interaction | $1-$3 | under $1 | over $5 |
| Support cost as % of ARR | 5-9% | under 5% | over 12% |
| Average handle time (AHT, escalated) | 10-20 min | under 8 min | over 30 min |
| First contact resolution (FCR) | 70-80% | 85%+ | under 60% |
| CSAT (post-resolution) | 85-92% | 95%+ | under 78% |
| Time-to-first-response (escalated) | under 1 hour | under 15 min | over 4 hours |
| Ticket volume per customer per year | 3-5 | under 3 | over 7 |

Sources: Digital Applied 2026, Supportbench 2026, Count.co 2026, Screenbuddy 2026.
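To score a programme against the table, each row can be encoded as thresholds. A minimal sketch covering five rows; the "between bands" label for values that fall in the table's gaps (for example, 50-58% AI deflection) is our addition, since the table leaves those ranges unclassified.

```python
# metric: (direction, top-quartile bound, healthy range, alarming bound), from the table above
BENCHMARKS = {
    "ai_deflection_rate":       ("higher", 0.58, (0.40, 0.50), 0.25),
    "self_service_deflection":  ("higher", 0.50, (0.30, 0.45), 0.20),
    "cost_per_ticket_usd":      ("lower", 12.0, (15.0, 30.0), 45.0),
    "support_cost_pct_arr":     ("lower", 0.05, (0.05, 0.09), 0.12),
    "first_contact_resolution": ("higher", 0.85, (0.70, 0.80), 0.60),
}


def grade(metric: str, value: float) -> str:
    direction, top, (low, high), alarm = BENCHMARKS[metric]
    if direction == "higher":
        if value >= top:
            return "top-quartile"
        if value < alarm:
            return "alarming"
    else:
        if value < top:
            return "top-quartile"
        if value > alarm:
            return "alarming"
    if low <= value <= high:
        return "healthy"
    return "between bands"


print(grade("ai_deflection_rate", 0.45))    # healthy
print(grade("cost_per_ticket_usd", 50.0))   # alarming
print(grade("support_cost_pct_arr", 0.04))  # top-quartile
```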

The structural insight: top-quartile programmes drive cost-per-ticket below $12 not by hiring cheaper agents but by deflecting 60%+ of tier-1 to self-service and AI, leaving the human team focused on the 30-40% of complex tickets that still need them. Agentic workflows are the load-bearing technology behind that compression.

The seven-step support automation install

A repeatable methodology, derived from the operational patterns that distinguish top-decile support automation programmes from average ones.

Step 1: Categorize the existing ticket base

Pull 90 days of tickets, run unsupervised clustering or LLM-based classification to surface the top 10-20 ticket categories by volume. Tier-1 deflection candidates: how-to questions, password resets, billing queries, basic configuration. The categorization is the foundation. You cannot deflect what you have not measured.
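A deliberately simple stand-in for this step. The text calls for unsupervised clustering or LLM-based classification; this sketch swaps in keyword rules (hypothetical categories and keywords) purely to show the shape of the output, a volume-ranked category list. Substring matching is crude ("charge" also matches "in charge of"), which is why a real programme would use a classifier.

```python
from collections import Counter

# Hypothetical rules, checked in order; the first match wins.
RULES = {
    "password_reset": ("password", "reset", "locked out", "2fa"),
    "billing": ("invoice", "billing", "charge", "refund"),
    "how_to": ("how do i", "how to", "where can i"),
    "configuration": ("configure", "setting", "integration", "sso"),
}


def categorize(subject: str) -> str:
    text = subject.lower()
    for category, keywords in RULES.items():
        if any(k in text for k in keywords):
            return category
    return "uncategorized"


def top_categories(subjects: list[str], n: int = 10) -> list[tuple[str, int]]:
    return Counter(categorize(s) for s in subjects).most_common(n)


subjects = ["How do I reset my password?", "Password reset link expired",
            "Refund for duplicate charge", "Where can I export reports",
            "SSO setup failing"]
print(top_categories(subjects, 3))
# [('password_reset', 2), ('billing', 1), ('how_to', 1)]
```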

Step 2: Build the help-center substrate first

For the top 20 ticket categories, write or rewrite help-center articles that resolve 90%+ of the variants. Articles should be customer-language, not engineering-language. AI search (Algolia, native Zendesk/Intercom) on top of this substrate produces 30-50% layer-2 deflection before any AI agent activates. Skipping this layer is the single most common reason "the chatbot doesn't work."
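Finding which high-volume categories still lack an article is a small join between the step-1 output and the help-center index. A sketch with hypothetical inputs (ticket counts by category and a category-to-articles mapping); how articles get tagged to categories is left as an assumption.

```python
def coverage_gaps(category_volumes: dict[str, int],
                  article_index: dict[str, list[str]],
                  top_n: int = 20) -> list[str]:
    """Top-`top_n` categories by ticket volume with no help-center article, highest first."""
    ranked = sorted(category_volumes.items(), key=lambda kv: kv[1], reverse=True)[:top_n]
    return [category for category, _ in ranked if not article_index.get(category)]


volumes = {"billing": 120, "sso": 80, "password_reset": 200, "exports": 15}
articles = {"password_reset": ["Reset your password"], "billing": []}
print(coverage_gaps(volumes, articles, top_n=3))  # ['billing', 'sso']
```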

Step 3: Deploy AI agent against the top categories first

Configure Intercom Fin, Zendesk AI agents, or Ada to handle the top 5 ticket categories. Run 4 weeks at 100% routing-to-human as a control, then flip to AI-first with human escalation. Measure deflection rate, CSAT delta, and resolution-time delta. Expand to the next 5 categories only after the first 5 hit 40%+ deflection at parity-or-better CSAT. Intercom's Customer Service Trends 2026 report documents the playbook: graduated rollout, not big-bang.
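The expansion gate in this step reduces to a per-category check. A sketch, assuming (the text does not specify) that each category in the current cohort must individually clear 40% deflection with AI CSAT at or above its human-only control; the figures are illustrative.

```python
def expansion_blockers(cohort: dict[str, tuple[float, float, float]],
                       min_deflection: float = 0.40) -> list[str]:
    """cohort maps category -> (deflection_rate, ai_csat, control_csat).
    Returns categories that fail the deflection floor or CSAT parity."""
    return [category for category, (deflection, ai_csat, control_csat) in cohort.items()
            if deflection < min_deflection or ai_csat < control_csat]


def ready_to_expand(cohort: dict[str, tuple[float, float, float]],
                    min_deflection: float = 0.40) -> bool:
    return not expansion_blockers(cohort, min_deflection)


cohort = {
    "password_reset": (0.62, 0.91, 0.88),
    "billing": (0.45, 0.87, 0.88),  # deflects, but CSAT below the control
    "how_to": (0.38, 0.90, 0.86),   # CSAT fine, deflection below 40%
}
print(expansion_blockers(cohort), ready_to_expand(cohort))  # ['billing', 'how_to'] False
```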

Step 4: Layer in agent augmentation

Roll out AI co-pilot for the human team handling escalations: drafted replies, ticket summaries, semantic ticket search. Train the team on when to trust suggestions and when to override. Measure AHT, FCR, agent NPS. Expect 20-40% productivity lift in the first 60 days, with diminishing returns after the team's workflow stabilizes around the augmentation.

Step 5: Wire in proactive support signals

Connect product telemetry to the support layer, the same instrumentation that customer success metrics use for health scoring. When a customer hits a 5xx error, a rate limit, or a known broken edge case, fire an in-app message or proactive email with the resolution. Layer customer health scoring to surface at-risk accounts to CSM/support before the customer escalates. This layer typically eliminates 10-20% of tickets that would have been raised, while improving CSAT.
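A toy version of the health-score surfacing described above. The signals, weights, caps, and the 40-point threshold are all hypothetical and would need calibrating against real churn history; the point is the shape: telemetry in, a bounded score out, at-risk accounts routed to CSM/support before they escalate.

```python
# Hypothetical weights, for illustration only.
WEIGHTS = {"errors_7d": -2.0, "open_tickets": -5.0, "login_trend": 30.0, "seat_utilization": 40.0}


def health_score(errors_7d: int, open_tickets: int,
                 login_trend: float, seat_utilization: float) -> float:
    """login_trend: fractional change in weekly active users (-1..1).
    seat_utilization: active seats / purchased seats (0..1). Returns a 0-100 score."""
    raw = (50.0
           + WEIGHTS["errors_7d"] * min(errors_7d, 10)        # cap so one outage can't dominate
           + WEIGHTS["open_tickets"] * min(open_tickets, 5)
           + WEIGHTS["login_trend"] * login_trend
           + WEIGHTS["seat_utilization"] * (seat_utilization - 0.5))
    return max(0.0, min(100.0, raw))


def at_risk(accounts: dict[str, dict], threshold: float = 40.0) -> list[str]:
    return [name for name, signals in accounts.items() if health_score(**signals) < threshold]


accounts = {
    "acme": dict(errors_7d=0, open_tickets=0, login_trend=0.1, seat_utilization=0.9),
    "globex": dict(errors_7d=8, open_tickets=3, login_trend=-0.3, seat_utilization=0.3),
}
print(at_risk(accounts))  # ['globex']
```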

Step 6: Close the loop with analytics

Weekly review: what new ticket categories appeared, what deflection rates are trending, where AI agent CSAT is dropping, which help-center articles are stale. Auto-route surfaced categories to the playbook owner within 7 days of first occurrence. Zendesk's CX Trends 2026 documents this discipline: programmes that recalibrate weekly compound deflection 8-15 points faster than those that ship and stagnate.
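The weekly review's two core questions (where deflection is falling, and which categories are new) can be computed from per-category counts. A sketch; the 5-point drop threshold and the 30-conversation minimum volume are assumptions chosen to keep noisy, low-volume categories out of the alert list.

```python
def deflection_drops(last_week: dict[str, tuple[int, int]],
                     this_week: dict[str, tuple[int, int]],
                     min_drop: float = 0.05, min_volume: int = 30):
    """Each argument maps category -> (ai_resolved, total).
    Returns (categories whose deflection fell by >= min_drop, categories new this week)."""
    flagged, new = [], []
    for category, (resolved, total) in this_week.items():
        if category not in last_week:
            new.append(category)
            continue
        prev_resolved, prev_total = last_week[category]
        if total < min_volume or prev_total < min_volume:
            continue  # too few conversations to read a trend
        drop = prev_resolved / prev_total - resolved / total
        if drop >= min_drop:
            flagged.append((category, round(drop, 3)))
    return flagged, new


last = {"billing": (50, 100), "sso": (30, 60), "exports": (5, 10)}
this = {"billing": (40, 100), "sso": (29, 58), "exports": (1, 10), "webhooks": (3, 40)}
print(deflection_drops(last, this))  # ([('billing', 0.1)], ['webhooks'])
```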

Step 7: Reframe the support team's mission

From "ticket handlers" to "escalation specialists, knowledge curators, and customer advocates." Promote senior agents to AI-agent oversight, knowledge-base curation, and proactive customer outreach. Top-decile programmes redeploy 30-50% of saved agent capacity into expansion conversations and proactive customer success, converting support cost into expansion revenue, with the lift compounding into net revenue retention.

Six failure patterns that destroy support automation programmes

Failure 1: The chatbot-as-strategy fallacy

Deploying an AI agent without first investing in the help-center substrate produces a chatbot that has nothing to draw from. Result: 15-25% deflection at best, customers frustrated, the project is rolled back, and the org concludes "AI doesn't work for us." The substrate is the precondition.

Failure 2: Hiding the human escape hatch

Some teams configure AI agents to make it almost impossible to reach a human. Customer-perceived deflection collapses, CSAT drops 15-20 points, retention erodes. The right configuration: AI-first, but a clear, fast path to human after 1-2 unsuccessful AI exchanges. Deflection should be earned, not enforced.
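The escape-hatch rule is small enough to state as code. A sketch, assuming an upstream signal that marks an AI exchange as unsuccessful (for instance, the customer replying that the answer did not help); the threshold defaults to 2, the upper end of the 1-2 range above.

```python
def next_step(failed_ai_exchanges: int, customer_asked_for_human: bool,
              max_failed: int = 2) -> str:
    """AI-first routing with a guaranteed exit: an explicit request always wins,
    and repeated AI failures hand off automatically."""
    if customer_asked_for_human or failed_ai_exchanges >= max_failed:
        return "handoff_to_human"
    return "ai_reply"


print(next_step(1, False))  # ai_reply
print(next_step(2, False))  # handoff_to_human
print(next_step(0, True))   # handoff_to_human
```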

Failure 3: Optimizing for deflection rate, not resolution rate

Deflection rate measures "did the AI handle this end-to-end without escalation?" Resolution rate measures "did the customer's problem actually get solved?" Programmes that anchor only on deflection produce high-deflection, low-resolution chatbots that customers route around (calling sales, posting on Twitter, churning quietly). Track both.
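Tracking both rates takes one extra field. A sketch, assuming resolution is proxied by "not escalated and no repeat contact on the same issue within 7 days"; the 7-day window and the record type are our assumptions, and CSAT or explicit confirmation are reasonable alternatives.

```python
from dataclasses import dataclass


@dataclass
class AIConversation:
    escalated: bool
    repeat_contact_7d: bool  # same customer, same issue, within 7 days


def deflection_and_resolution(convs: list[AIConversation]) -> tuple[float, float]:
    n = len(convs)
    deflected = [c for c in convs if not c.escalated]
    resolved = [c for c in deflected if not c.repeat_contact_7d]
    return len(deflected) / n, len(resolved) / n


convs = ([AIConversation(False, False)] * 4 + [AIConversation(False, True)] * 2
         + [AIConversation(True, False)] * 4)
print(deflection_and_resolution(convs))  # (0.6, 0.4)
```

The gap between the two numbers (here 20 points) is the share of "deflected" conversations where the customer came back, which is the route-around behaviour described above.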

Failure 4: Skipping agent augmentation

Pouring 100% of investment into AI deflection while ignoring the human team that handles escalations is short-sighted. The escalations are where the high-value resolution work happens. Without augmentation, AHT stays flat, FCR stagnates, and the team burns out on the increasingly complex residual ticket pool.

Failure 5: No closed-loop analytics

Shipping the architecture without a weekly recalibration cadence guarantees decay. New ticket categories emerge, AI agent quality drifts on edge cases, knowledge-base articles go stale. Programmes that ship support automation as a project (not a system) lose 8-12 percentage points of effectiveness annually.

Failure 6: Support team treated as cost centre, not capacity engine

The strategic upside of support automation is not just lower cost. It is the redeployment of human capacity into expansion conversations, customer success, and proactive outreach that drives NRR. Programmes that automate support without restructuring the team's mission capture only 50% of the available value.

The 90-day install plan

Days 1-30: Diagnose and substrate

Categorize 90 days of tickets, identify top 10-20 categories. Audit existing help center, identify gaps. Write or rewrite top 20 help-center articles. Stand up AI search on the help center. Define metrics dashboard (deflection rate, CPT, CSAT, AHT, FCR). Output: substrate ready, baseline measurements established.

Days 31-60: AI agent + augmentation

Configure AI agent (Intercom Fin, Zendesk AI, Ada) against top 5 ticket categories. Run 2 weeks A/B against human-only baseline. Roll out agent co-pilot to escalation team. Train team on AI agent override patterns. Output: AI deflection live for top 5 categories at 40%+, human team augmented.

Days 61-90: Proactive + closed-loop

Wire product telemetry into proactive support triggers. Layer customer health score signals. Stand up weekly analytics review with documented playbook owner. Expand AI agent coverage to next 5 categories. Output: full five-layer architecture live, weekly recalibration cadence in place.

What "good" looks like at 12 months

- AI agent deflection rate: 40-55% on routine tier-1 (vs 0-15% baseline).

- Self-service deflection: 35-50% (vs 15-20% baseline).

- Combined deflection (AI + self-service): 60-75% of tier-1 inbound.

- Cost per ticket (blended): under $20 (vs $30-$45 baseline).

- Support cost as % of ARR: under 7% (vs 10-12% baseline).

- CSAT: 88-93% (vs 82-85% baseline); automation done right lifts CSAT rather than degrading it.

- Average handle time (escalated): 30-40% reduction.

- 30-50% of saved agent capacity redeployed into proactive customer success / expansion.

Those deltas are the natural compounding of installing the five-layer architecture and recalibrating weekly. Salesforce's 2026 State of Service reinforces the broader pattern: programmes with AI-first support architecture compound CSAT and cost-efficiency 8-15 points faster than those running tactical chatbot pilots without the underlying system. The same systemic discipline that defines customer success automation applies here, and the two layers reinforce each other in retention compounding.

Want a diagnostic on whether your support architecture is decoupling cost from customer count, or just shifting it around?

FAQ

What is SaaS support automation?

SaaS support automation is the engineered system that resolves customer support inquiries without proportional human effort. Modern stacks layer AI agents (Intercom Fin, Zendesk AI, Ada, Salesforce Agentforce) on top of self-service infrastructure, agent co-pilot tools, proactive support signals, and closed-loop analytics. Best-in-class B2B SaaS implementations deflect 60-75% of tier-1 tickets, cut cost-per-ticket to under $20, and lift CSAT simultaneously. The objective is to decouple support cost from customer count, freeing human capacity for high-value escalations and proactive outreach.

How much does SaaS support automation cost in 2026?

For $10M-$40M ARR B2B SaaS (1,000-3,000 customers): annual platform cost runs $60K-$140K covering Zendesk Suite + Zendesk AI agents (or Intercom + Fin), agent augmentation tools, and analytics. Per-resolution pricing has emerged as the dominant model: Intercom Fin charges around $0.99 per AI resolution, Salesforce Agentforce uses credit bundles. Compared to $30-$60 human-handled tickets, the unit economics are compelling. Total programme cost typically pays back in 6-12 months through deflection-driven savings.

What is a good ticket deflection rate?

For 2026 B2B SaaS: median tier-1 AI deflection runs 41.2%, and the top quartile clears 58.7% (Digital Applied 2026). Below 25% deflection signals architecture problems (weak help-center substrate, poorly tuned AI agent, no analytics loop). Top performers push further: Klarna reports that its OpenAI-powered assistant handles two-thirds of customer service chats, the workload of hundreds of full-time agents.

How do you reduce SaaS support tickets?

Install the five-layer system: ticket deflection (AI agents), self-service infrastructure (help-center + AI search), agent augmentation (co-pilot), proactive support (telemetry-triggered outreach), closed-loop optimization (weekly recalibration). Categorize the existing ticket base, build the help-center substrate first, then deploy AI agents against top categories. Top-quartile programmes cut ticket volume per customer per year from 7+ to under 3 within 12 months.

What is the difference between a chatbot and an AI agent?

Chatbots are rule-based, follow scripted decision trees, achieve 20-30% containment, and frustrate customers when their query falls outside the script. AI agents are LLM-powered, reason over the customer's full context plus the help-center substrate, achieve 40-70% autonomous resolution, and handle out-of-script queries by routing intelligently. The 2024-2026 generation (Intercom Fin, Zendesk AI agents, Ada, Decagon) represents a step-change in capability vs the 2018-2023 chatbot generation.

Will support automation kill CSAT?

Not when implemented correctly. Top-quartile programmes lift CSAT 5-10 points after automation deployment because customers receive instant resolution to routine queries (vs hours of waiting), and the human team is freed to provide deeper attention on complex escalations. CSAT degrades only when programmes hide the human escape hatch, optimize for deflection over resolution, or deploy AI agents on top of a weak help-center substrate. The architecture matters more than the technology.

How do AI agents like Intercom Fin and Zendesk AI compare?

Intercom Fin charges per resolution (~$0.99/resolution after 50 free per month on base tier), excels at conversational tone and product-specific reasoning. Zendesk AI agents are bundled into Zendesk Suite + AI add-on, integrate deeply with the Zendesk knowledge base, and excel at large-scale ticket workflows. Salesforce Agentforce uses credit bundles, suits enterprises already on Service Cloud. Ada and Decagon are specialist AI agent layers that sit on top of any helpdesk. Selection depends on existing stack, ticket volume, pricing tolerance, and brand voice requirements.

What is the ROI of support automation for B2B SaaS?

Typical 12-month payback: $80K-$140K annual platform cost returns $300K-$600K in support cost savings on a $20M ARR base, with CSAT up 5-10 points and 30-50% of agent capacity redeployed into expansion / proactive support. At a 5x revenue multiple, the secondary effect (NRR uplift from redeployed capacity) often exceeds the direct cost savings, making support automation one of the highest-ROI operations investments available to mid-market B2B SaaS in 2026.
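The payback arithmetic behind this answer, as a sketch. It counts platform cost only; implementation effort, content work, and change management are excluded, and including them lengthens payback. On these platform-only numbers, payback lands inside the 12-month window at both ends of the range.

```python
def payback_months(annual_cost: float, annual_savings: float) -> float:
    """Months until cumulative savings cover one year of platform cost."""
    if annual_savings <= 0:
        raise ValueError("no savings, no payback")
    return annual_cost / (annual_savings / 12)


# The ranges above, pairing the least and most favourable ends.
print(f"{payback_months(140_000, 300_000):.1f} months")  # 5.6 months
print(f"{payback_months(80_000, 600_000):.1f} months")   # 1.6 months
```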

Architect SaaS support automation that compounds deflection, leverage, and CSAT, not just chatbot deployments.

peppereffect installs end-to-end support operating systems for $10M-$40M ARR B2B SaaS: the five-layer architecture, the help-center substrate, the AI agent layer, the augmentation tools, the proactive support telemetry, the closed-loop analytics. Logic-gated execution, not retainer hours.

Resources

- Digital Applied, Customer Service AI Agent Statistics 2026 (120+ Data Points)

- Supportbench, How to Calculate the Real Cost per Ticket

- Count.co, Support Cost per Contact: Formula & Benchmarks 2026

- Build MVP Fast, Best AI for Customer Support April 2026

- Eesel, Is Salesforce Agentforce Worth The Cost: 2026 Pricing Breakdown

- Fin AI, 10 Best AI Agents for Customer Service in 2026

- The AI Corner, SaaS Defense Playbook AI Era 2026

- Screenbuddy, Screen Recording for SaaS: Self-Service Cost Economics

- Useparagon, How Top AI Companies Leverage Integrations

- Giva, Top 16 Customer Service Software 2026

- Kayako, Best Ticketing System 2026: 15 Tools Reviewed

- Zendesk, CX Trends Report 2026

- Intercom, Customer Service Trends 2026

- Salesforce, State of Service 2026

- Gartner, Customer Service & Support Predictions 2026

- HubSpot, Customer Service Statistics 2026

- Help Scout, Customer Service Cost Statistics

- Klarna, AI Assistant Handles Two-Thirds of Customer Service Chats